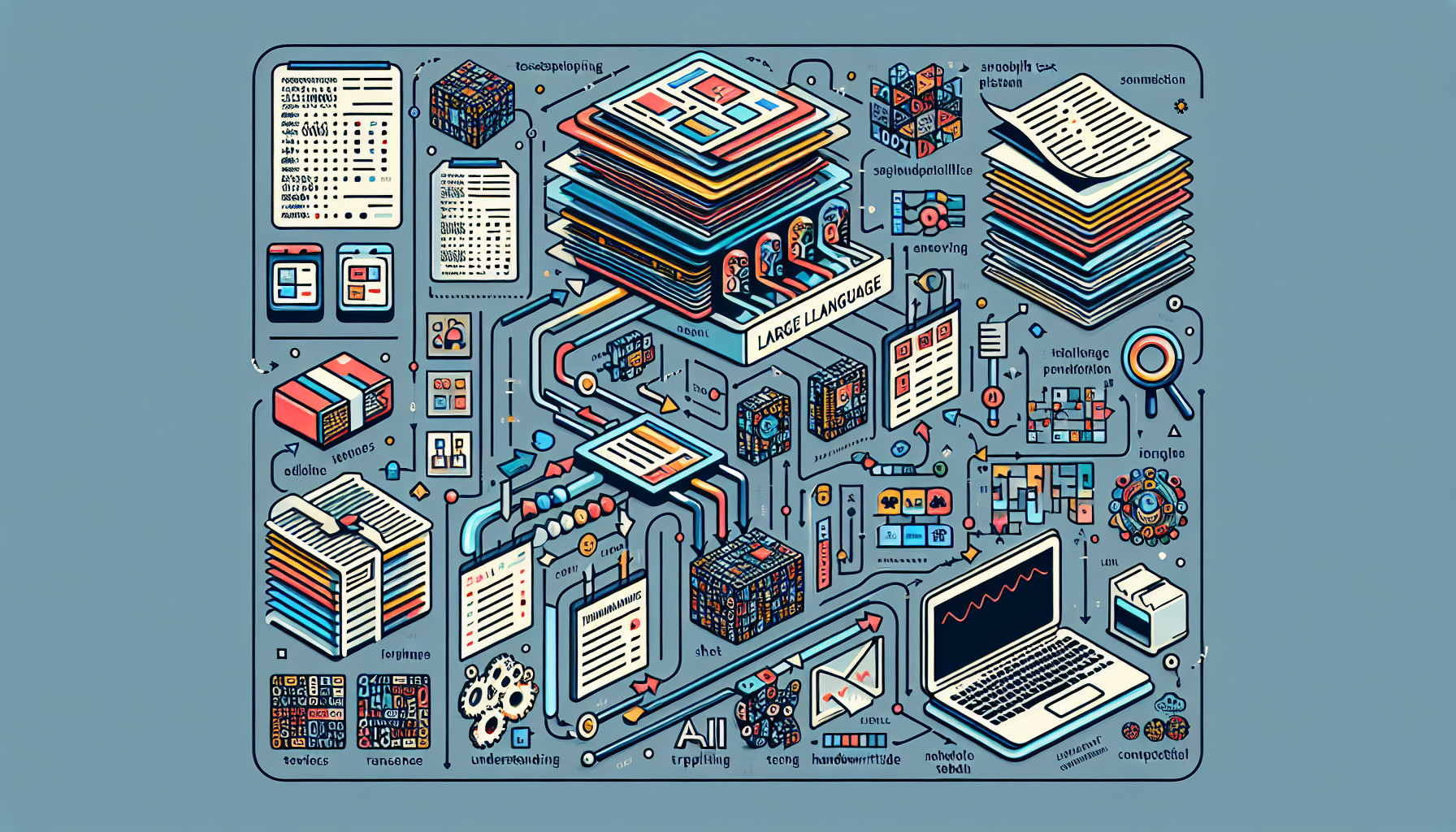

Large language models (LLMs) and Vision AI are redefining document processing across industries. Traditional OCR systems, while useful for basic extraction, struggle with context, layouts, and handwriting. LLMs bring contextual understanding, enabling enterprises to process invoices, compliance documents, or handwritten forms at scale with far greater accuracy. This article explores how modern AI outperforms legacy approaches and what it means for financial workflows, auditing, and operations teams.

The Power of Large Language Models in Document Processing

LLMs excel at interpreting documents holistically. They understand sections, tables, narratives, and implied relationships, producing structured outputs ready for downstream systems. Instead of building one-off models for each document type, organisations can deploy general-purpose LLMs that adapt quickly to new layouts or languages. This flexibility can shorten implementation cycles considerably.
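
To make "structured outputs ready for downstream systems" concrete, here is a minimal Python sketch of the step that usually sits between the model and the downstream system: checking the model's JSON reply before anything consumes it. The field names, the `InvoiceRecord` type, and the `parse_invoice` helper are illustrative assumptions, not part of any particular product or API.

```python
import json
from dataclasses import dataclass
from decimal import Decimal, InvalidOperation

# Hypothetical target schema; these field names are assumptions for illustration.
REQUIRED_FIELDS = ("vendor", "invoice_number", "amount_due", "tax_amount")


@dataclass(frozen=True)
class InvoiceRecord:
    vendor: str
    invoice_number: str
    amount_due: Decimal
    tax_amount: Decimal


def parse_invoice(raw_json: str) -> InvoiceRecord:
    """Turn a model's JSON reply into a typed record, or raise ValueError."""
    try:
        data = json.loads(raw_json)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")

    # Reject replies that leave out any field the downstream system needs.
    missing = [name for name in REQUIRED_FIELDS if name not in data]
    if missing:
        raise ValueError(f"missing fields: {', '.join(missing)}")

    # Parse amounts as Decimal so currency values are not rounded as floats.
    try:
        amount_due = Decimal(str(data["amount_due"]))
        tax_amount = Decimal(str(data["tax_amount"]))
    except InvalidOperation as exc:
        raise ValueError("amounts must be numeric") from exc

    return InvoiceRecord(
        vendor=str(data["vendor"]),
        invoice_number=str(data["invoice_number"]),
        amount_due=amount_due,
        tax_amount=tax_amount,
    )
```

A reply that fails this check can be routed to a retry or to human review rather than reaching an ERP in a half-filled state.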

Beyond OCR: Context-Aware Understanding

OCR extracts text but lacks context; it treats words as disconnected entities. LLMs evaluate sentences, headings, and metadata together, recognising intent and nuance. For example, they can distinguish between "amount due" and "tax amount" even if the layout changes. They also detect anomalies such as missing signatures, inconsistent totals, or conflicting clauses, and flag the items that require review.
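
Two of the anomalies mentioned above, missing signatures and inconsistent totals, are easy to illustrate once the fields have been extracted. The sketch below is a simplified, rule-based stand-in for that review step, not how an LLM performs it internally; the `ExtractedInvoice` fields and the one-cent tolerance are assumptions chosen for the example.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass
class ExtractedInvoice:
    # Hypothetical fields, assumed to come from an earlier extraction step.
    line_totals: list[Decimal]
    tax_amount: Decimal
    amount_due: Decimal
    has_signature: bool


def find_anomalies(
    doc: ExtractedInvoice, tolerance: Decimal = Decimal("0.01")
) -> list[str]:
    """Return human-readable issues that should be routed to a reviewer."""
    issues = []
    if not doc.has_signature:
        issues.append("missing signature")

    # The amount due should equal the line items plus tax, within a small tolerance.
    expected = sum(doc.line_totals, Decimal("0")) + doc.tax_amount
    if abs(expected - doc.amount_due) > tolerance:
        issues.append(
            f"inconsistent totals: expected {expected}, found {doc.amount_due}"
        )
    return issues
```

An empty list means the document passed both checks; anything else becomes a flag for a human reviewer.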

Processing Handwritten and Scanned Documents

Many compliance-heavy workflows rely on handwritten registers or scanned PDFs. Vision AI models paired with LLMs combine handwriting recognition with contextual reasoning to convert rough scans into structured tables. This capability is invaluable for sectors like manufacturing, banking, and logistics where legacy paperwork persists.

Automated Cross-Verification and Validation

AI agents compare information across multiple documents—bank statements, attendance logs, invoices—to confirm consistency. They identify duplicate records, mismatched numbers, or missing attachments. By automating these checks, teams shorten audit cycles and reduce human error, while maintaining detailed evidence trails for regulators.
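
The cross-checks described above can be pictured as a reconciliation pass over records that have already been extracted. The following Python sketch compares invoices against bank payments and reports duplicates, mismatched amounts, and invoices with no payment. It assumes, purely for illustration, that each payment reference carries the invoice number; the `Invoice`, `BankPayment`, and `cross_verify` names are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Invoice:
    invoice_number: str
    amount: Decimal


@dataclass(frozen=True)
class BankPayment:
    reference: str  # assumed to carry the invoice number
    amount: Decimal


def cross_verify(
    invoices: list[Invoice], payments: list[BankPayment]
) -> dict[str, list[str]]:
    """Compare invoices with bank payments and list the discrepancies."""
    # Invoice numbers that appear more than once are reported as duplicates.
    counts = Counter(inv.invoice_number for inv in invoices)
    duplicates = sorted(num for num, n in counts.items() if n > 1)

    # Total paid per reference, so split payments are added together.
    paid: dict[str, Decimal] = {}
    for payment in payments:
        paid[payment.reference] = paid.get(payment.reference, Decimal("0")) + payment.amount

    mismatched, unpaid, seen = [], [], set()
    for inv in invoices:
        if inv.invoice_number in seen:
            continue  # check each invoice number only once
        seen.add(inv.invoice_number)
        if inv.invoice_number not in paid:
            unpaid.append(inv.invoice_number)
        elif paid[inv.invoice_number] != inv.amount:
            mismatched.append(inv.invoice_number)

    return {"duplicates": duplicates, "mismatched": mismatched, "unpaid": unpaid}
```

Keeping the result as named lists of invoice numbers makes it straightforward to store alongside the source documents as part of an evidence trail.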

Cost-Efficiency and Scalability

General-purpose LLMs can process thousands of pages at a fraction of the cost of building bespoke models for each document type. Cloud deployment allows elastic scaling, so organisations can handle seasonal spikes or onboarding surges without expanding headcount. Coupled with robotic process automation (RPA), the output can feed directly into ERPs, CRMs, or compliance systems.

The Future of Document Processing with AI

LLMs continue to improve with multimodal capabilities that combine text, images, and even voice. Tighter integration with analytics platforms is likely, enabling near-instant insights from contracts or field forms. Low-code tooling should let business teams configure new document flows without waiting months for IT implementation.

Conclusion

AI-driven document processing delivers accuracy, speed, and compliance-readiness that traditional OCR cannot match. By adopting LLM-based solutions, enterprises convert unstructured PDFs, scans, and handwriting into machine-ready data, unlocking automation opportunities across finance, audits, operations, and customer onboarding. The result: faster decisions, reduced manual effort, and resilient workflows.